Porting an existing single producer/single consumer concurrent queue into an IPC mechanism via memory mapped files and getting a throughput of 135 million messages per second in pure Java.

In my previous post I covered a single producer/consumer queue developed and shared by Martin Thompson capable of delivering an amazing 130M messages per second. The queue he delivered is a great tool for communicating between threads, but sometimes communicating between threads is not enough. Sometimes you need to leave your JVM and go out of process. Inter Process Communication (IPC) is a different problem to inter thread communication. Can it be cracked by the same approach?

IPC, what's the problem?

Inter Process Communication is an old problem and there are many ways to solve it (which I will not discuss here). There are several attractions to specialized IPC solutions for Java:

- Faster than socket communication.

- An out of process integration option with applications written in other languages.

- A means of splitting large VMs to smaller ones improving performance by allowing GC and JIT specialization.

Off the heap we go!

To do IPC we must go off heap. This has several implications for the queue, most importantly that references are not supported. Also note persistence to and from the queue is required, though one could extend my implementation to support a zero copy interaction where a struct is acquired, written and committed instead of the offer method, and similarly acquired, read and finally released instead of the poll method. I plan to make several flavours of this queue to test out these ideas in the near future.

My IPC queue uses a memory mapped file as a means of acquiring a chunk of shared memory; there is no intention to use the persisted values, though further development in that direction may prove interesting to some. So now that I got me some shared memory, I had to put the queue in it.

I started by laying out the queue counters and cached counters. After realizing the counters need to be aligned to work properly I learnt how to align memory in Java. I went on to verify that aligned memory offers the guarantees required for concurrent access. Quick summary:

- aligned access means reading/writing data types at addresses which are a multiple of their size (e.g. an 8 byte long at an address divisible by 8).

- unaligned access is not atomic, which is bad for concurrency :(

- unaligned access is slow, which is bad for performance :(

- unaligned access may not work, depending on OS and architecture. Not working is very bad :(
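The alignment rule in the bullets above can be sketched with a little bit arithmetic. This is a minimal illustration, not the code from the queue itself: the class name `Alignment`, the helper names and the 64 byte `CACHE_LINE_SIZE` are assumptions made for the example.

```java
// Hypothetical helper, for illustration only.
final class Alignment {
    // Assumed cache line size; 64 bytes is typical on x86 but not guaranteed.
    static final int CACHE_LINE_SIZE = 64;

    // Round an address up to the next multiple of 'alignment'.
    // 'alignment' must be a power of two for the mask trick to work.
    static long alignUp(long address, int alignment) {
        return (address + (alignment - 1)) & ~((long) alignment - 1);
    }

    // An access of 'size' bytes (a power of two) is aligned when the
    // address is a multiple of that size.
    static boolean isAligned(long address, int size) {
        return (address & (size - 1)) == 0;
    }
}
```

For example, `Alignment.alignUp(100, Alignment.CACHE_LINE_SIZE)` yields 128, the start of the next cache line, and an address already on a boundary is returned unchanged.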

This is how the memory layout worked out:

To illustrate in glorious ASCII graphics (each - is a byte), this is what the memory layout looks like when broken into cache lines:

|--------|--------|--------|head....|--------|--------|--------|--------|

|--------|--------|--------|tailCach|--------|--------|--------|--------|

|--------|--------|--------|tail----|--------|--------|--------|--------|

|--------|--------|--------|headCach|--------|--------|--------|--------|

|int1int2|int3int4|int5int6|etcetcet|cetcetce|tcetcetc|etcetcet|cetcetce|

...

...
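Read as offsets from a cache line aligned base address, the diagram above could be expressed roughly as follows. This is a sketch derived from the ASCII layout only: the class name `QueueLayout`, the method names and the 64 byte cache line are assumptions, and the real code may compute these differently.

```java
// Hypothetical layout helper derived from the ASCII diagram, for illustration only.
final class QueueLayout {
    static final int CACHE_LINE_SIZE = 64; // assumed cache line size
    static final int COUNTER_OFFSET = 24;  // counter sits in the 4th 8-byte slot of its line

    // Each counter gets a cache line of its own to avoid false sharing.
    static long headAddress(long base)      { return base + COUNTER_OFFSET; }
    static long tailCacheAddress(long base) { return base + CACHE_LINE_SIZE + COUNTER_OFFSET; }
    static long tailAddress(long base)      { return base + 2L * CACHE_LINE_SIZE + COUNTER_OFFSET; }
    static long headCacheAddress(long base) { return base + 3L * CACHE_LINE_SIZE + COUNTER_OFFSET; }

    // The int slots start right after the 4 counter cache lines.
    static long arrayBase(long base)        { return base + 4L * CACHE_LINE_SIZE; }

    // Address of the slot for a given counter value; capacity must be a power of 2
    // so that (index & (capacity - 1)) wraps around the buffer.
    static long elementAddress(long base, long index, int capacity) {
        return arrayBase(base) + ((index & (capacity - 1)) << 2); // 4 bytes per int
    }
}
```

Because the base is cache line aligned and every counter offset is a multiple of 8, each 8 byte counter access is aligned, and each 4 byte slot access is too.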

I played around with mixing off heap counters with an on heap buffer, but in the interest of brevity I'll summarize and say the JVM does not like that very much and the end result performance is not as good as the all on-heap or all off-heap solutions. The code is available with everything else.

Once alignment and memory layout were sorted I had to give up the flexibility of having reference pointers and settle for writing my data (an integer) directly into the memory. This leaves my queue very restrictive in its current form. I intend to revisit it and see what I can do to offer a more extendable API on top of it.

Let me summarize the recipe at this point:

- Create a memory mapped file large enough to hold:

  - 4 cache lines for counters/cached counters.

  - 4 bytes (per integer) * queue capacity (must be a power of 2).

  - 1 spare cache line to ensure you can align the above to the cache line.

- Get a mapped byte buffer, which is a direct byte buffer on top of the mapped memory.

- Steal the address and get the contained aligned byte buffer.

- Set up pointers to the counters and the beginning of the buffer.

- Replace use of natural counters with off heap counters accessed via Unsafe using the pointers.

- Replace use of array with use of offset pointers into buffer and Unsafe access.

- Test and debug until you work out the kinks...
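The first two steps of the recipe can be sketched with the standard NIO API. This is a minimal sketch under stated assumptions: the class name `MappedQueueMemory`, its method names and the 64 byte cache line are made up for the example, and it stops before the address stealing and Unsafe steps.

```java
import java.io.IOException;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical helper, for illustration only.
final class MappedQueueMemory {
    static final int CACHE_LINE_SIZE = 64; // assumed cache line size

    // 4 counter cache lines + 4 bytes per int slot + 1 spare line for alignment.
    static int requiredBytes(int capacity) {
        if (capacity <= 0 || Integer.bitCount(capacity) != 1) {
            throw new IllegalArgumentException("capacity must be a power of 2");
        }
        return 4 * CACHE_LINE_SIZE + 4 * capacity + CACHE_LINE_SIZE;
    }

    // Map the file read/write; the result is a direct buffer over the mapped memory.
    // The mapping stays valid after the channel is closed.
    static MappedByteBuffer map(Path file, int capacity) throws IOException {
        try (FileChannel channel = FileChannel.open(file,
                StandardOpenOption.CREATE,
                StandardOpenOption.READ,
                StandardOpenOption.WRITE)) {
            return channel.map(FileChannel.MapMode.READ_WRITE, 0, requiredBytes(capacity));
        }
    }
}
```

For a capacity of 1024 integers this asks for 256 + 4096 + 64 = 4416 bytes. A second process mapping the same file with the same capacity gets a view of the same bytes.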

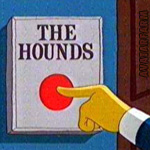

Smithers, release the hounds

Here are the numbers (messages per second) for using the different implementations in process:

| Implementation/Affinity | Same core | Cross core | Cross socket |
|---|---|---|---|
| P1C1QueueOriginal3 | 110M | 130M | 19M |
| P1C1OffHeapQueue | 130M | 220M | 200M |
| P1C1QueueOriginalPrimitive | 124M | 220M | 215M |

Confused? Let me explain. The first line shows the measurements taken for the original queue. They are similar to what was presented in the previous post, though I saw a slight improvement in the results after increasing the compile threshold to 100000.

The second line is my off-heap implementation of the same algorithm. It is significantly faster. This is not IPC yet, this is in process. The reason it is faster is that the data is inlined in the queue, which means that by loading an entry in the queue we get the data as opposed to a reference to the data. A reference is what you get when you have an Object[] array: the array holds the references and the data is elsewhere, which seems to make it more painful as we get further from the producer.

The last entry is a mutation of P1C1QueueOriginal3 into a primitive array backed queue to compare performance like for like. As you can see this displays very similar results to the off heap implementation supporting the theory that data in-lining is behind the observed performance boost.

The lesson here is an old one, namely that pointer chasing is expensive business further amplified by the distance between the producing CPU and consuming CPU.

The off-heap queue can offer an alternative to native code integration as the consuming thread may interact directly with the off-heap queue and write results back to a different off-heap queue.

Running a similar benchmark adapted to use a memory mapped file as the backing DirectByteBuffer for the off-heap queue we get:

same core - ops/sec=135M

across cores - ops/sec=98M

across sockets - ops/sec=25M

JOY! A pure Java IPC that gives you 135M messages per second is more throughput than you'd get with most commercial products out there. This is still not as fast as the same queue in process and I admit I'm not sure what the source of the performance difference is. Still I am quite happy with it.

A few notes/observations from the experimentation process:

- I got a variety of results, stabilizing around different average throughputs. I chose the best for the above summary and plan to go into detail about the results in the near future.

- The JVM was launched with: -XX:+UseCondCardMark -XX:CompileThreshold=100000

- Removing the Thread.yield from the producer/consumer loops improved performance when running on the same core, but made it worse otherwise.

- Moving the queue allocation into the test loop changes the performance profile dramatically.

- I've not had time to fully explore the size of the queue as a variable in the experiment, but the little I've done suggests it makes a difference; choose the right size for your application.

This is the first post I have read in the series -- cool stuff! I've heard of using off heap techniques for caching and it's good to see other uses.

I was curious... How would this compare to a C/C++ implementation? Does Java offer any advantages that C/C++ would not have? Seems like those languages would be better suited for your goals.

C/C++ would certainly make dealing directly with memory easier. Apart from that I'm not sure...

I plan to create a C++ port and see how it fares. In particular I'd like to see how this works as a method of in process/out of process integration for Java with native code. When I do, I'm sure I'll find out ;)

As for goals, I want a fast queue, and I like Java. Writing it in C++ will solve one but leave the other unsatisfied. There are a lot of low latency/soft real time systems being written in Java that can benefit from the above approach (I think).

Glad you liked it.

The main advantage of using Java is that you can easily write 90%+ in normal Java which is more efficient and effective if you have developers of mixed ability.

What is the guarantee that the writes to shared memory/mem mapped files are visible to the other cpus?

[1] http://stackoverflow.com/questions/7061910/when-does-an-o-sync-write-become-visible-in-the-pagecache-mmapd-file

[2] http://milek.blogspot.com/2010/12/linux-osync-and-write-barriers.html

reply below

I treat the mapped memory region as just that and the assumption carries to the use of Unsafe.put* as a means of writing to that memory. I make no write to disc guarantees. I never sync, or in fact do anything with the mapped file. I believe that up to a point the queue is persistent, but I am not pursuing the extension of that belief to any guarantees. It's a proof of concept, not a framework...

My understanding (validated by experimentation so far, no deep dive into the texts in this area) is that shared memory can be treated as normal memory, thus following the same rules which apply across the board for CPU/memory interaction and maintaining correctness.

If this sounds weakly stated... it is, I had no time to do as much testing as one would require before putting this into production. But I observe it works, and that in itself is interesting.

Please enlighten me further if you believe there is an issue I am missing here, or if you know any part to not work.

Thanks :)

Nitsan, I have not run extensive tests and so I have not been able to reproduce those edge cases (if any). While doing some reading on shared memory I came across those "visibility" issues. Will let you know if I find anything concrete.

Thanks. Keep up the good work on your blog.

In P1C1OffHeapQueue's poll/offer you have...

final int e = UnsafeAccess.unsafe.getInt(offset);

UnsafeAccess.unsafe.putInt(offset, e.intValue());

I'm wondering why you are not using getIntVolatile/putIntVolatile here. I would have thought there was no guarantee of visibility for those int writes (even in the same VM), though I may be missing something.

Thanks

The lines you quote are the counterparts to the array read/write from the original implementation. They are indeed plain read/writes.

The ordering we require is guaranteed by the volatile read of the counters (LOADLOAD barrier: if TAIL is 10 then I get happens-before relationship to the events before it was set to 10) and the lazySet/putOrdered to the counters (STORESTORE barrier: all the stores which happen before cannot be re-ordered, so any write made to the array before incrementing TAIL is visible).
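The same ordering argument can be shown on heap with a plain int[] and AtomicLong counters. This is a simplified sketch, not the off-heap queue discussed above: the class name `SpscIntQueueSketch` is made up, and the cached counters and cache line padding are left out for brevity.

```java
import java.util.concurrent.atomic.AtomicLong;

// Hypothetical single producer/single consumer sketch, for illustration only.
final class SpscIntQueueSketch {
    private final int[] buffer;
    private final int mask;
    private final AtomicLong head = new AtomicLong(); // next slot to read
    private final AtomicLong tail = new AtomicLong(); // next slot to write

    SpscIntQueueSketch(int capacity) {
        if (capacity <= 0 || Integer.bitCount(capacity) != 1) {
            throw new IllegalArgumentException("capacity must be a power of 2");
        }
        buffer = new int[capacity];
        mask = capacity - 1;
    }

    // Call from the single producer thread only.
    boolean offer(int value) {
        long t = tail.get();
        if (t - head.get() == buffer.length) {
            return false;                   // queue is full
        }
        buffer[(int) t & mask] = value;     // plain write of the data
        tail.lazySet(t + 1);                // ordered store: the data write cannot move after it
        return true;
    }

    // Call from the single consumer thread only; returns null when empty.
    Integer poll() {
        long h = head.get();
        if (h == tail.get()) {              // volatile read of tail
            return null;                    // queue is empty
        }
        int value = buffer[(int) h & mask]; // plain read, ordered after the volatile read above
        head.lazySet(h + 1);                // release the slot back to the producer
        return value;
    }
}
```

The element reads and writes stay plain; only the counters carry the ordering, which is why the quoted getInt/putInt lines do not need the volatile variants.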

Think I follow that (it's more complicated than I thought, relying on the semi-volatile lazySet if I understand). I've added a checksum (on i, which I passed to the queue instead of TEST_VALUE) and indeed the consumer gets the same checksum.

Nitsan - What mechanism were you using to publish the memory address to the application that did not create the byte buffer? You were using this for IPC, correct?

They are using the same memory mapped file, i.e. same memory region --> same address.
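A small sketch of the idea, using two independent mappings of one file inside a single JVM to stand in for two processes. The class name `SharedMappingSketch` and its methods are hypothetical. Note that the Java API leaves some details of mapped file visibility platform dependent, and that the virtual address of each mapping may differ between mappings or processes; what is shared is the underlying memory, so offsets into the region are what both sides must agree on.

```java
import java.io.IOException;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

// Hypothetical demo of two views over the same mapped file, for illustration only.
final class SharedMappingSketch {
    // Map 'size' bytes of the file in shared read/write mode.
    static MappedByteBuffer mapShared(Path file, int size) throws IOException {
        try (FileChannel channel = FileChannel.open(file,
                StandardOpenOption.CREATE,
                StandardOpenOption.READ,
                StandardOpenOption.WRITE)) {
            return channel.map(FileChannel.MapMode.READ_WRITE, 0, size);
        }
    }

    // Write through one mapping and read the value back through another.
    static int roundTrip(int value) throws IOException {
        Path file = Files.createTempFile("ipc-queue", ".bin");
        file.toFile().deleteOnExit();
        MappedByteBuffer writerView = mapShared(file, 4096);
        MappedByteBuffer readerView = mapShared(file, 4096);
        writerView.putInt(0, value);  // offset 0 of the shared region
        return readerView.getInt(0);  // same offset, different mapping
    }
}
```

In a real IPC setup each process would call something like `mapShared` on the same path and then apply the same layout offsets to its own mapping.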

Ah, that's what I was missing - this is a service facilitated by the operating system. Now I've learned about a new dusty corner of Java, thanks! :-)

I believe you are in luck and have in fact learnt about a new dusty corner which works for Java/C/C++ and others where you can strip the address from a memory mapped file :-)

What I have found working with a library which also supports IPC between processes over memory mapped files is that while it is faster than most developers need it is also much too low level for them. I would be interested in your ideas on how to make this sort of coding more accessible.

BTW This sort of thing works in production too ;)

It's a challenge to make it more accessible, ultimately a code generation tool might be the way forward here compiling struct/type mappings and 'data lanes' out of defined event types. This would hide the thorny/risky underbelly of the construct while allowing people to use it.

I would disagree with anything being too fast :) the faster this part of the codebase is, the more slack you have elsewhere. I'd agree that the speed might not be worth the novel approach to certain people/applications/organisations.

Happy to hear this approach is not without real world validation :)

On the subject of the queue being "very restrictive": Wouldn't it be possible to offer() and poll() for byte[] arrays instead of Integer? One would prepend a 4 byte int for the array length and instead of incrementing head and tail by 1, one could increment by 4 + array.length. Would that change anything?

You could, but in its current form it's not on offer... You could look at it as a stream of integers, on top of which you are free to serialise your data in any way you like. I would not go for a byte[] poll() as that would require creating the byte[] and copying to it. Something along the lines of some flyweight object which hides the fact that it is backed by an off-heap chunk of memory would probably be better.

If you are looking to deal with blobs then what you describe would work, but note there are changes required both on writing to and on reading from the queue as you are effectively changing the event size, and thus the shift in memory to find the next slot available/ready. It is definitely possible, please fork the code and have a go, I'd be happy to help :-)

Yeah, I see now what you mean (still struggling with the offset calculation and overflowing long counters). Even for the special case of variable but power of 2 sized arrays (payload + length info), it's not that easy ;)

For what it's worth, it works - but only if addresses are aligned on 8 byte boundaries. I.e., if (array.length + 4) is a multiple of 8. Otherwise I'm running into visibility issues between the two processes. Me stupid, I should have known this beforehand.
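One way to keep every record on an 8 byte boundary is to pad each record up to the next multiple of 8. The sketch below illustrates that framing on a plain ByteBuffer; it is not the commenter's code. The class name `FramedRecords` and its methods are hypothetical, and wrap-around at the end of the buffer and the head/tail counters are deliberately left out.

```java
import java.nio.ByteBuffer;

// Hypothetical length-prefixed framing, for illustration only.
final class FramedRecords {
    static final int LENGTH_FIELD = 4; // 4 byte int holding the payload length
    static final int ALIGNMENT = 8;    // keep every record start on an 8 byte boundary

    // Size of length field + payload, rounded up to a multiple of 8.
    static int paddedSize(int payloadLength) {
        return (LENGTH_FIELD + payloadLength + (ALIGNMENT - 1)) & ~(ALIGNMENT - 1);
    }

    // Write one record at 'offset' and return the offset of the next record.
    static int write(ByteBuffer buffer, int offset, byte[] payload) {
        buffer.putInt(offset, payload.length);
        for (int i = 0; i < payload.length; i++) {
            buffer.put(offset + LENGTH_FIELD + i, payload[i]);
        }
        return offset + paddedSize(payload.length);
    }

    // Read the record starting at 'offset' into a fresh array.
    static byte[] read(ByteBuffer buffer, int offset) {
        int length = buffer.getInt(offset);
        byte[] payload = new byte[length];
        for (int i = 0; i < length; i++) {
            payload[i] = buffer.get(offset + LENGTH_FIELD + i);
        }
        return payload;
    }
}
```

With this scheme a 3 byte payload occupies 8 bytes and a 5 byte payload occupies 16, so a counter advanced by `paddedSize` always lands on an aligned offset. Copying into a fresh byte[] on read is the cost mentioned earlier in the thread; a flyweight over the buffer would avoid it.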

Awesome :-)

If you want to share the code I'd be interested to see where you took it.

In any case, glad if my work contributed to yours, always a pleasure.

I'm away on leave now. Once I'm back I'll give the code a little polishing and run more tests before I share it. I already found a bug after my last notice when I thought it was finished ;) I'll keep you informed. BTW, thanks for your great articles!

Just found your blog, great stuff, thanks.

One thing in this post made me a bit confused though - you write "I went on to verify that aligned memory offers the guarantees required for concurrent access". But your conclusion in the referenced post was "The take away from the above is that offheap storage should be treated as if atomicity is not guaranteed". Does aligned memory indeed offer the guarantees required? Or maybe it does not matter as long as putOrderedLong and getLongVolatile work as expected? Thanks

Glad you like it :)

The conclusion is indeed misleading and I shall attempt to correct the wording. Aligned access is atomic on x86, this is why the IPC works correctly. If you use Unsafe directly to manipulate memory and have taken care to ensure alignment then you should be fine, I would expect a high degree of portability there. If you use DirectByteBuffer then any number of things could change in the implementation (which currently boils down to an Unsafe.put*()) which would make the operation non-atomic. Surprisingly enough on HeapByteBuffer there is no atomicity beyond putByte().

This post is eye opening! One thing I was wondering about was a statement you made about the JVM not liking off-heap counters with on heap data and vice-versa. Could you elaborate on that? Mostly just curious where things fell over.

It's been a while since I wrote the post, so had to scan through the code variants again. I've experimented with taking the counters offheap while maintaining the data on heap, it proved unhelpful and as my final goal was the offheap queue I didn't dig further.

I'm curious now to see how it would behave with the cached counters on heap and the rest offheap, or with an FFBuffer like algorithm...

Will come back to this topic at some point :-) glad you found it interesting

Yes, exactly! The one piece that felt unnatural to me was having the cached counters as part of the off heap structure.

It's a cool article! Thx! BTW, what are the advantages and disadvantages of using off-heap memory in Java?

I enjoy your blog. I have played with unsafe for JSON parsing, and I have been playing around with queues to optimize performance.

Thanks. Big fan.

--Rick Hightower